The pattern is consistent enough across industries and organisation sizes that it has become one of the defining facts of enterprise technology adoption in the 2020s. An organisation invests in artificial intelligence. The technology works. The models perform well in testing. The infrastructure is sound. The data pipelines run. And then, somewhere between the proof of concept and the point where the technology was supposed to change how the business operates, the initiative stalls, fragments, or quietly fails.

The explanation offered most often is that AI is complex, that the talent is hard to find, that the data is messier than expected. These are real factors. They are not the primary cause. The primary cause, documented now across enough case studies and survey data that it has become difficult to dispute, is that the organisation did not have the governance structures to absorb what it was building.

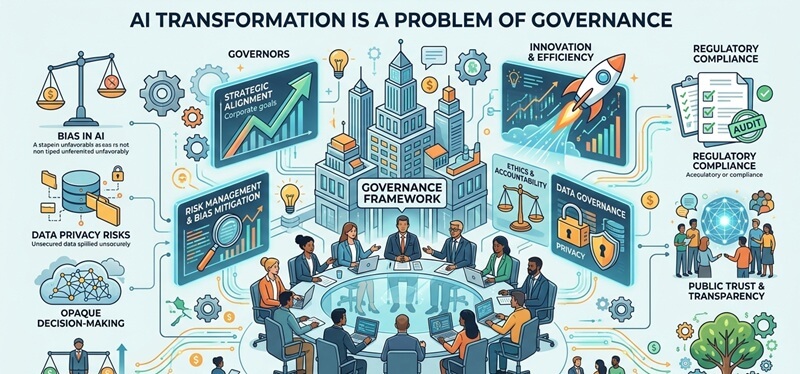

AI transformation is a problem of governance. Not primarily of technology, not of data quality, not of algorithmic sophistication. Governance. The structures that define who owns a decision, who is accountable when that decision produces harm, who has the authority to stop a system that is producing wrong outputs, and who ensures that what the AI is doing aligns with what the organisation says it stands for.

This article is about why that is true, why it is harder to fix than most governance frameworks acknowledge, and what the organisations that are actually succeeding at responsible AI transformation are doing differently. The argument is not that technology does not matter. It is that technology without governance is not a solution. It is infrastructure with no one in charge.

Contents

- 1 The Core Argument: What Governance Actually Means in the Context of AI

- 2 Why AI Specifically Creates Governance Problems That Previous Technologies Did Not

- 3 Why Governance Fails Even When Organisations Know It Matters

- 4 The Governance Acceleration Paradox: Why Governance Enables Rather Than Constrains

- 5 What Mature AI Governance Actually Looks Like in Practice

- 6 Traditional AI Governance vs Mature AI Governance: A Practical Comparison

- 7 The Regulatory Dimension: What Organisations Must Govern That They Cannot Choose to Ignore

- 8 The Human Dimension of AI Governance: What Frameworks Cannot Fully Capture

- 9 What Organisations Should Actually Do: A Practical Starting Point

- 10 Frequently Asked Questions

- 11 Conclusion

The Core Argument: What Governance Actually Means in the Context of AI

Governance is one of those words that everyone agrees is important and almost no one defines precisely enough to be useful. In the context of artificial intelligence, it is worth being specific.

Governance is not the same as compliance. Compliance asks whether the organisation is following the rules that exist. Governance asks whether the organisation has built the structures to make good decisions in areas where the rules either do not yet exist or cannot be applied mechanically. AI sits almost entirely in that second category. The rules are incomplete, evolving, and frequently contested. Governance is what fills the gap between what regulation mandates and what responsible operation requires.

Governance is also not the same as oversight, though oversight is part of it. Oversight is reactive: it monitors what is happening and intervenes when something goes wrong. Governance is structural: it defines the decision rights, accountability lines, and review processes that shape how AI systems are built and operated before the problems arise. An organisation with only oversight and no governance will always be reacting. An organisation with genuine governance structures will be preventing problems rather than responding to them.

In practice, AI governance answers four questions that every deployed AI system should have clear answers to. Who decided to build this? Who is responsible for what it does? Who has the authority to change or stop it? And who speaks for the people affected by it? When those four questions have clear, documented, and enforceable answers, the organisation has the foundation of AI governance. When they do not, it has a technology project without a steward.

The Deloitte Global Boardroom Survey published in 2025 found that 66 percent of boards still report limited or no AI expertise among their directors. Only 14 percent discuss AI at every board meeting. These numbers are not just a commentary on board composition. They are a direct indicator of the governance gap: the people with ultimate organisational accountability are largely not equipped to exercise meaningful oversight of one of the most consequential sets of decisions their organisations are making.

Why AI Specifically Creates Governance Problems That Previous Technologies Did Not

It is worth asking why AI poses a governance challenge that previous waves of enterprise technology did not, at least not at the same scale or with the same urgency. The answer lies in several properties of AI systems that are genuinely different from the software that organisations have been governing, imperfectly but adequately, for decades.

AI Makes Decisions That Previously Required Human Judgment

Traditional enterprise software executes instructions. It does what it is told, within the parameters it is given. AI systems, particularly those based on machine learning, make inferences. They produce outputs that no one explicitly programmed, derived from patterns in data that no human fully mapped. When an AI system decides which loan application to approve, which job candidate to advance to interview, which patient to flag for early intervention, or which content to amplify on a platform, it is exercising a form of judgment that was previously the exclusive domain of human decision-makers with defined accountability.

The governance problem this creates is not that the AI is necessarily making worse decisions than humans. In many cases it makes better ones. The problem is that the accountability structures that existed for human decision-making do not automatically transfer to AI decision-making. When a loan officer denies an application, there is a person who made that decision, who can explain it, and who can be held accountable for it. When an AI model denies an application, the accountability is distributed across the data team that built the training set, the engineering team that deployed the model, the business unit that defined the objective function, and the executive who approved the deployment. That distribution of accountability can easily become a vacuum of accountability.

AI Scales Errors in Ways That Human Systems Do Not

A human decision-maker who applies a biased criterion to 20 decisions per day affects 20 people. An AI model applying the same criterion to 20,000 decisions per day affects 20,000 people before anyone notices the pattern. The scale amplification property of AI means that governance failures that would be manageable in a human system become systemic problems very quickly. This is not a theoretical concern. The healthcare systems that applied algorithmic triage models and inadvertently disadvantaged Black patients, the hiring platforms that deprioritised women’s applications for technical roles, and the insurance pricing models that correlated race with premium levels through proxy variables all demonstrate the same dynamic: a governance failure that would have been caught and corrected in a slower system was amplified to scale before the problem became visible.
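The arithmetic behind this amplification is simple enough to sketch. The snippet below is illustrative only: the 30-day detection lag is an assumed figure, not one drawn from any of the cases above, and `people_affected` is a hypothetical helper.

```python
def people_affected(decisions_per_day: int, days_until_detected: int) -> int:
    """Count decisions made under a flawed criterion before anyone notices it."""
    return decisions_per_day * days_until_detected


# Assumed for illustration: the flaw goes unnoticed for 30 days in both cases.
DETECTION_LAG_DAYS = 30

human_reviewer = people_affected(20, DETECTION_LAG_DAYS)
ai_model = people_affected(20_000, DETECTION_LAG_DAYS)

print(f"Human reviewer: {human_reviewer:,} people affected")
print(f"AI model: {ai_model:,} people affected")
print(f"Amplification factor: {ai_model // human_reviewer}x")
```

Whatever the detection lag is, the ratio between the two cases stays the same. What changes is the absolute number of people affected before a correction is possible, which is why detection speed matters far more in the automated case.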

AI Operates Across Organisational Boundaries in Ways That Confuse Accountability

Most enterprise AI systems are not built entirely in-house. They use foundation models from major technology providers, data from third-party sources, infrastructure from cloud vendors, and monitoring tools from specialist software companies. Each layer of this stack introduces a party with some responsibility for the system’s behaviour and no complete accountability for the whole. When something goes wrong, the question of whose governance failure it represents becomes genuinely difficult to answer. The EU AI Act addresses this in part by assigning distinct obligations to the different operators in the AI value chain, including providers, importers, distributors, and deployers. But regulatory frameworks follow technology deployment, and the gap between what the law requires and what responsible governance demands is significant.

AI Erodes Existing Governance Structures Rather Than Simply Operating Outside Them

This is the dimension that most governance frameworks underestimate, and it is perhaps the most important one. AI does not just add new decisions that require new governance. It changes the nature of existing decisions in ways that can make existing governance structures less effective. When middle managers use AI tools to make performance assessments that they then present as their own judgment, the organisation’s existing performance management governance is not bypassed. It is corrupted from within. The accountability structure says the manager is responsible for the assessment. The actual mechanism producing the assessment is an AI system that no one in the governance chain can examine or interrogate. This pattern, where AI is formally invisible in decision chains that are formally governed, is one of the most significant and least discussed governance challenges of the current moment.

Why Governance Fails Even When Organisations Know It Matters

Most of the organisations that are struggling with AI governance are not struggling because they do not understand that governance matters. Most of them have published responsible AI principles. Many have established AI ethics committees. A significant number have appointed Chief AI Officers or equivalent roles. Yet the gap between principled statements about AI governance and operational governance that actually shapes how AI systems behave remains wide. Understanding why that gap persists is essential to closing it.

Governance Is Treated as a Post-Deployment Activity

The most consistent structural failure in enterprise AI governance is that governance processes are applied after the system has been built rather than embedded in how it is designed. An ethics committee that reviews a deployed model and identifies bias problems is performing damage assessment, not governance. By the time a system is in production, the training data has been fixed, the objective function has been defined, the architecture has been chosen, and the costs of making fundamental changes are high. Genuine AI governance must begin at the requirements stage, before a single line of code is written or a single data source is assembled. The question of whether a given AI application should be built at all, and under what constraints, is a governance question that only has meaningful impact when asked before the investment has been made.

The Incentive Structures Actively Undermine Governance

The people who are evaluated and rewarded for deploying AI systems quickly are not the same people who bear the consequences when those systems cause harm. Product teams are measured on shipping velocity and feature adoption. The people affected by a biased hiring model or an erroneous medical algorithm typically have no representation in the incentive structure of the team that built it. This creates a systematic bias toward deployment speed over deployment quality that no amount of governance principle articulation can overcome unless the incentive structures change. Organisations that have successfully embedded AI governance have almost always paired governance requirements with performance frameworks that reward adherence to them, not just the achievement of deployment milestones.

Governance Expertise and Technology Expertise Are Siloed

The people who understand AI systems well enough to identify their failure modes are typically not the people who understand the regulatory, ethical, and organisational dimensions of those failure modes. Legal and compliance teams understand the regulatory landscape but often lack the technical literacy to evaluate whether a model’s behaviour complies with the principles they are asked to enforce. Data science teams understand the technical architecture but often lack the contextual knowledge to assess the social consequences of what they are building. The governance failure is not in either group. It is in the space between them, where the technical and the normative need to be integrated and where most organisations have no functional bridge.

Shadow AI Makes Governance Structurally Difficult

Shadow AI, the use of AI tools by employees without organisational approval or oversight, is one of the fastest-growing governance challenges in enterprise technology. According to multiple surveys published in 2024 and 2025, a substantial majority of employees in large organisations are using AI tools, including generative AI systems, in ways that their organisations are either unaware of or have not explicitly sanctioned. The governance problem this creates is not primarily one of policy violation. It is one of systemic opacity. When the inputs that shape consequential decisions are partly generated by AI systems that exist outside the governance perimeter, the governance structures applied to the visible parts of the decision chain are protecting a perimeter that no longer accurately defines where the decisions are being made.

The Governance Acceleration Paradox: Why Governance Enables Rather Than Constrains

The most persistent obstacle to AI governance adoption is the belief, widely held among technology teams and product organisations, that governance slows innovation. The evidence does not support this belief. It contradicts it.

The organisations that have built mature AI governance frameworks consistently report that governance does not reduce their deployment velocity over meaningful timeframes. It increases it. The mechanism is straightforward. A team that deploys an AI system without adequate governance review will encounter the consequences of that omission later: regulatory penalties, reputational damage from a bias incident, the cost of retraining a model on better data, or the complexity of retrofitting explainability into a system that was built without it. Each of these consequences consumes more time and resource than the governance process that would have prevented them.

Governance also accelerates deployment by reducing uncertainty. One of the primary sources of deployment delay in enterprise AI is the lack of clear decision rights. When it is unclear who has the authority to approve an AI deployment, when legal and compliance teams have no established framework for evaluating one, and when no one can articulate what the organisation’s risk tolerance is for a given application category, projects stall in review cycles that have no defined endpoint. A governance framework that establishes clear approval pathways and explicit risk criteria does not create delay. It replaces indefinite delay with a predictable, bounded process.

The research from multiple governance practitioners supports what the Nadcab analysis describes as the governance acceleration paradox: structured AI governance frameworks accelerate innovation rather than constraining it by removing uncertainty, speeding decision-making, building internal trust, and enabling scalable deployment across organisational units. The organisations that treat governance as infrastructure rather than overhead are systematically outperforming those that treat it as a compliance cost.

What Mature AI Governance Actually Looks Like in Practice

Most articles on AI governance describe the principles that governance should embody: accountability, transparency, fairness, explainability, privacy, security. These principles are correct and necessary. They are also insufficient on their own. Principles become governance only when they are operationalised into specific structures, processes, and accountabilities that shape actual decisions in the real world. What follows is a description of what that operationalisation looks like in organisations that have moved beyond aspirational statements.

Tiered Risk Classification for AI Use Cases

Mature AI governance does not treat all AI applications with the same level of scrutiny. It establishes a risk tier framework that applies proportionate governance to the potential impact of each system. A low-risk application, such as an AI tool that suggests document formatting or filters spam, may require only a basic documentation requirement and a periodic review. A high-risk application, such as an algorithm that influences hiring, lending, medical treatment, or criminal justice outcomes, requires pre-deployment bias assessment, explainability documentation, defined audit trails, and board-level awareness at minimum. The EU AI Act provides a regulatory version of this tiering framework, but organisations operating responsibly are implementing their own tiering systems that go further than the regulatory floor in areas where their stakeholders or ethical commitments require it.
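One way to make a tiering framework concrete is to encode it as data, so that the controls required for a use case are looked up rather than debated each time. The sketch below is a minimal illustration under stated assumptions: it uses only two tiers, classifies by decision domain alone, and the names `RiskTier`, `UseCase`, `classify`, and `required_controls` are hypothetical. A real framework would have more tiers and richer criteria.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    LOW = "low"
    HIGH = "high"


# Domains the paragraph above names as high-risk; an organisation would define its own list.
HIGH_IMPACT_DOMAINS = {"hiring", "lending", "medical_treatment", "criminal_justice"}

# Controls per tier, taken from the examples in the paragraph above.
REQUIRED_CONTROLS = {
    RiskTier.LOW: ["basic documentation", "periodic review"],
    RiskTier.HIGH: [
        "pre-deployment bias assessment",
        "explainability documentation",
        "defined audit trail",
        "board-level awareness",
    ],
}


@dataclass(frozen=True)
class UseCase:
    name: str
    domain: str


def classify(use_case: UseCase) -> RiskTier:
    """Assign a tier from the decision domain alone (a deliberate simplification)."""
    if use_case.domain in HIGH_IMPACT_DOMAINS:
        return RiskTier.HIGH
    return RiskTier.LOW


def required_controls(use_case: UseCase) -> list[str]:
    """Look up the controls that must be in place before deployment."""
    return REQUIRED_CONTROLS[classify(use_case)]


spam_filter = UseCase(name="spam filter", domain="email_filtering")
cv_screener = UseCase(name="CV screening model", domain="hiring")

print(spam_filter.name, "->", classify(spam_filter).value, required_controls(spam_filter))
print(cv_screener.name, "->", classify(cv_screener).value, required_controls(cv_screener))
```

Keeping the tier criteria and the control lists in plain data structures makes it straightforward to add stricter internal tiers where an organisation wants to go beyond the regulatory floor.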

Defined Decision Rights for Every AI System

Every AI system in deployment should have a documented answer to four questions: who approved the deployment, who owns the ongoing performance and compliance of the system, who has the authority to modify it, and who has the authority to shut it down. These decision rights should be recorded, reviewed at defined intervals, and updated when organisational structures change. The absence of documented decision rights is the single most reliable predictor of governance failure in AI systems, because when something goes wrong with a system that has no named owner, the response is paralysis rather than action.
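A decision-rights record of this kind can be as simple as a structured entry per system plus a check that flags gaps. The following is a rough sketch rather than a prescribed schema: the field names, the 365-day review interval, and the example names are all assumptions made for illustration.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class DecisionRights:
    """One record per deployed AI system, answering the four questions above."""
    system_name: str
    approved_by: Optional[str] = None
    owner: Optional[str] = None
    modify_authority: Optional[str] = None
    shutdown_authority: Optional[str] = None
    last_reviewed: Optional[date] = None


ROLE_FIELDS = ("approved_by", "owner", "modify_authority", "shutdown_authority")


def missing_rights(record: DecisionRights) -> list[str]:
    """Return the decision rights that have no named person attached."""
    return [name for name in ROLE_FIELDS if not getattr(record, name)]


def review_overdue(record: DecisionRights, today: date, max_age_days: int = 365) -> bool:
    """A record that was never reviewed, or was reviewed too long ago, is overdue."""
    if record.last_reviewed is None:
        return True
    return (today - record.last_reviewed).days > max_age_days


# Hypothetical example: a system with no one authorised to shut it down.
record = DecisionRights(
    system_name="loan pre-screening model",
    approved_by="J. Rivera",
    owner="M. Chen",
    modify_authority="M. Chen",
    last_reviewed=date(2024, 1, 15),
)

print(missing_rights(record))
print(review_overdue(record, today=date(2025, 6, 1)))
```

The value of the check lies less in the code than in running it routinely: a gap found in a scheduled review is an administrative task, whereas the same gap found during an incident is the paralysis described above.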

Data Governance as a Prerequisite, Not a Parallel Track

AI governance cannot be separated from data governance because an AI system’s behaviour is a direct function of the data it was trained on and the data it operates on in production. An organisation that has strong AI governance principles but weak data governance has a false sense of security. The training data defines the model’s implicit assumptions about the world. If that data is biased, incomplete, or unrepresentative, the most sophisticated model architecture and the most thorough post-deployment monitoring cannot fully compensate. Data governance, including data quality standards, data lineage documentation, and access controls on training data, must be a prerequisite for AI deployment rather than a parallel initiative.

Board-Level AI Literacy as a Governance Requirement

The Deloitte survey finding that two-thirds of boards have limited or no AI expertise is not simply a talent gap. It is a governance gap. Boards that lack the technical literacy to ask meaningful questions about AI risk cannot exercise genuine oversight. They can receive reports, but they cannot evaluate whether those reports reflect the reality of what the AI systems are doing. Building AI literacy at the board level does not require directors to become data scientists. It requires them to understand enough about how AI systems produce outputs to ask the questions that reveal whether governance is working: What data was this model trained on? What does the organisation know about where it fails? Who would know first if it produced a discriminatory output? What would happen in that case? These are governance questions, not technical ones, and they are not being asked consistently enough in most boardrooms.

Incident Response Procedures for AI Failures

One of the clearest indicators of governance maturity is whether an organisation has a defined, practised incident response procedure for AI system failures. This means not just technical failure, such as a model going offline, but consequential failure: a model that produces an output causing harm to a customer, an algorithmic decision that is challenged as discriminatory, or an AI-assisted process that produces regulatory non-compliance. The organisations that respond well to these incidents are the ones that defined the response protocol before the incident occurred. Those that respond poorly are the ones that are determining the protocol in real time, under public scrutiny, with no preparation.

Traditional AI Governance vs Mature AI Governance: A Practical Comparison

The table below maps the gap between how AI governance typically operates in organisations that have not yet matured their approach and what it looks like in those that have.

| Governance Dimension | Traditional Enterprise AI | Mature AI Governance |
| --- | --- | --- |
| Accountability Structure | Fragmented across IT, legal, and business units | Unified ownership with named accountability at each layer |
| Decision Rights | Unclear or informal. AI decisions rarely documented | Explicit and documented for each AI use case and risk tier |
| Risk Assessment | Ad hoc, post-deployment, or compliance-driven | Pre-deployment, tiered by impact, integrated into build process |
| Ethical Oversight | Occasional review by legal or ethics committee | Embedded in product and data workflows from design stage |
| Explainability Requirement | Optional or aspirational | Required for high-impact decision systems as standard |
| Board Visibility | Reported occasionally, rarely in operational detail | Regular structured reporting on AI risk and performance |
| Regulatory Alignment | Reactive, addressed when violations arise | Proactive, mapped to EU AI Act, sector-specific requirements |
| Data Governance Link | Separate function, loosely connected to AI | Integrated. Data governance is a prerequisite for AI deployment |
| Incident Response | No defined protocol for AI failure or misuse | Defined escalation, containment, and remediation procedures |
| Innovation Impact | Governance seen as constraint. Teams work around it | Governance enables faster deployment by reducing rework and risk |

The Regulatory Dimension: What Organisations Must Govern That They Cannot Choose to Ignore

AI governance is not entirely a voluntary exercise. The regulatory landscape is evolving rapidly and, in several jurisdictions, has already produced binding requirements that make governance failures directly consequential.

The EU AI Act, which entered into force in 2024 and is phasing in its requirements through 2026 and 2027, establishes the most comprehensive regulatory framework for AI governance currently in effect anywhere in the world. It classifies AI applications by risk, prohibits certain applications entirely, and establishes specific conformity assessment, transparency, and audit requirements for high-risk systems. Organisations operating in EU markets or deploying AI that affects EU citizens are subject to these requirements regardless of where they are headquartered.

The United States presents a more fragmented regulatory picture. America’s AI Action Plan, as analysed by the Harvard Edmond and Lily Safra Center for Ethics, advocates for reduced federal regulation and a pro-innovation stance that places greater responsibility on corporate boards and senior management to manage AI risks voluntarily. This deregulatory approach does not reduce governance requirements. It redistributes them. Organisations operating in the US market that choose to interpret the absence of comprehensive federal regulation as permission to de-prioritise AI governance are making a strategic error. The Caremark line of legal cases establishes board liability for failing to oversee mission-critical operational risks. As AI becomes mission-critical, the legal exposure from governance failure grows regardless of whether that failure is also a regulatory violation.

Sector-specific requirements in healthcare, financial services, and employment are already producing enforcement actions against AI deployments that failed to meet existing anti-discrimination, consumer protection, and patient safety obligations. The NIST AI Risk Management Framework provides a voluntary but increasingly referenced standard for AI governance practices in the United States, and organisations that build their governance frameworks against it are better positioned both for regulatory scrutiny and for the voluntary audits that institutional investors and major customers are increasingly requesting.

The Human Dimension of AI Governance: What Frameworks Cannot Fully Capture

There is a dimension of AI governance that formal frameworks tend to underrepresent, which is the human experience of organisations where AI decision-making is poorly governed. The people most affected by ungoverned AI are rarely in the room where the governance decisions are made.

The employee who discovers that their performance assessment was generated by an AI tool their manager did not disclose has a governance concern that no framework document addresses directly. The loan applicant who was declined by an algorithm and cannot get an explanation that helps them understand what to do differently faces a transparency failure that a principle of explainability does not automatically remedy. The patient whose diagnostic pathway was influenced by a model that performed less well on their demographic without anyone in the clinical system being aware of that performance gap has been harmed by a data governance failure that no one in the governance chain was monitoring.

These human consequences are not abstract. They are the actual stakes of AI governance failure, and keeping them concrete is one of the most important things governance practitioners can do to maintain the urgency that governance requires. Governance frameworks that are built primarily around regulatory compliance and reputational risk management will eventually produce governance structures that protect the organisation from the consequences of AI failure rather than protecting the people whom the AI affects. That distinction is not semantic. It determines whose interests the governance structures actually serve.

The organisations that are leading in responsible AI transformation are the ones that have built mechanisms for the affected populations to have structured input into governance decisions. This does not mean that customers or employees design AI systems. It means that their interests are formally represented in the governance process, that their feedback channels are monitored as governance inputs rather than customer service issues, and that their experience of AI-driven decisions is treated as evidence about whether the governance is working.

What Organisations Should Actually Do: A Practical Starting Point

The literature on AI governance is extensive and the principles are well established. What most organisations struggling with AI governance need is not a more sophisticated framework but a more honest assessment of where their current governance actually breaks down and a prioritised set of actions that address those specific failures.

The first and most important action is to conduct an honest inventory of AI systems already in deployment. Most large organisations have more AI systems operating than their governance structures are aware of. This includes shadow AI tools used by employees, vendor platforms with embedded AI components that were not explicitly evaluated as AI systems at procurement, and legacy algorithmic systems that predate the current governance conversation but that are making consequential decisions. You cannot govern what you cannot see.

The second action is to assign named ownership to every AI system in the inventory. Not departmental ownership, where accountability dissolves across a team. Named ownership, where a specific individual is responsible for the system’s performance and compliance. This single structural change, converting anonymous ownership into personal accountability, has more immediate governance impact than almost any framework document.

The third action is to define the organisation’s risk tier criteria and apply them to the existing inventory. This identifies which systems require immediate governance attention, which need moderate review, and which can be governed through lighter-touch processes. Without this triage, governance resources are applied uniformly to a very heterogeneous population of AI systems, which means they are simultaneously over-applied to low-risk applications and under-applied to high-risk ones.
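The first three actions can be combined into a single triage pass over the inventory. The sketch below assumes a three-level tier label and a free-text `source` field. Those choices, the entry names, and the `triage` helper are hypothetical and would need adapting to an organisation's own criteria.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class InventoryEntry:
    name: str
    tier: str                   # "high", "moderate", or "low"
    named_owner: Optional[str]  # a specific individual, not a department
    source: str                 # e.g. "sanctioned", "vendor_embedded", "shadow", "legacy"


TIER_ORDER = {"high": 0, "moderate": 1, "low": 2}


def triage(inventory: list[InventoryEntry]) -> list[InventoryEntry]:
    """Order systems by tier, putting unowned systems first within each tier."""
    return sorted(
        inventory,
        key=lambda e: (TIER_ORDER[e.tier], e.named_owner is not None, e.name),
    )


def unowned(inventory: list[InventoryEntry]) -> list[str]:
    """Names of systems that still lack a named individual owner."""
    return [e.name for e in inventory if e.named_owner is None]


# Hypothetical inventory covering the kinds of systems listed above.
inventory = [
    InventoryEntry("meeting summariser", "low", None, "shadow"),
    InventoryEntry("churn forecast", "moderate", "B. Example", "legacy"),
    InventoryEntry("loan scoring model", "high", "A. Example", "sanctioned"),
    InventoryEntry("resume screener", "high", None, "vendor_embedded"),
]

for entry in triage(inventory):
    print(f"{entry.tier:<9}{entry.name:<22}{entry.named_owner or 'NO NAMED OWNER'}")
```

Sorting by tier first and ownership second puts the system that is both high-risk and unowned at the top of the list, which is usually where governance attention is most overdue.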

The fourth action is to build one governance process end to end before building many. Organisations that try to implement comprehensive AI governance frameworks across all systems simultaneously almost always produce governance theatre: documents that exist but do not shape decisions. Organisations that pick their highest-risk AI application, build a complete governance process for it, and then use that experience to develop the template for the rest are building something that actually works.

The fifth action is to connect AI governance to data governance explicitly and structurally, not just in principle documents. This means data governance teams are involved in AI system design from the start, training data sources are subject to the same quality and compliance standards as any other governed data asset, and the data provenance of every deployed model is documented and auditable.

Frequently Asked Questions

Q1. Why is AI transformation considered a problem of governance rather than technology?

AI transformation fails primarily because organisations do not have the structures to define accountability, manage risk, and align AI behaviour with organisational values at scale. A frequently cited estimate is that approximately 70 percent of enterprise AI projects fail. The argument of this article is that most of them fail not because the technology is insufficient but because governance gaps allow AI systems to operate without clear ownership, oversight, or accountability. Technology builds the capability. Governance determines whether that capability produces intended outcomes responsibly.

Q2. What is AI governance and what does it actually include?

AI governance is the set of structures, processes, and accountability mechanisms that define how AI systems are built, deployed, monitored, and retired within an organisation. It includes decision rights specifying who has authority over AI systems, risk classification frameworks determining what level of scrutiny each application requires, data governance standards ensuring training and operational data meets quality and compliance requirements, explainability and audit requirements for high-impact systems, incident response procedures, and board-level oversight mechanisms.

Q3. How does AI governance differ from AI compliance?

Compliance asks whether an organisation is meeting the rules that currently exist. Governance asks whether the organisation has built the structures to make responsible decisions in areas where rules are incomplete, evolving, or cannot be applied mechanically. AI sits almost entirely in the second category. A compliant organisation may still be ungoverned in practice if it meets regulatory minimums without having the internal structures to identify and respond to AI failures that fall outside regulatory scope.

Q4. What does shadow AI mean for AI governance?

Shadow AI refers to the use of AI tools by employees without organisational approval or oversight. Surveys from 2024 and 2025 consistently show that the majority of employees in large organisations use AI tools in ways their organisations have not sanctioned or are unaware of. This creates a governance problem because decisions that formally sit within the organisation’s governance structures are being partially shaped by AI inputs that exist outside those structures, creating systematic opacity in how consequential decisions are actually being made.

Q5. Does AI governance slow down innovation?

Evidence consistently shows the opposite. Organisations with mature AI governance frameworks deploy AI faster over meaningful timeframes than those without them, because governance eliminates the rework, regulatory penalties, and reputational damage that ungoverned deployments eventually produce. Governance also replaces indefinite review delays caused by unclear decision rights with predictable, bounded approval processes. The perception that governance slows innovation is based on comparing the speed of an ungoverned deployment with the speed of a governed one in isolation, ignoring the downstream costs of the ungoverned approach.

Q6. What role should the board play in AI governance?

The board’s role in AI governance is to provide strategic oversight, define the organisation’s AI risk appetite, ensure that governance structures exist and are functioning, and retain accountability for the most consequential AI applications. The Deloitte Global Boardroom Survey found that 66 percent of boards have limited or no AI expertise. Boards do not need to become technically proficient in AI. They need sufficient literacy to ask meaningful questions about AI risk and to evaluate whether the answers they receive reflect genuine governance or governance theatre.

Q7. What is the EU AI Act and how does it affect AI governance requirements?

The EU AI Act is the most comprehensive binding AI regulatory framework currently in force. It classifies AI applications by risk, prohibits certain applications, and establishes specific requirements for high-risk AI systems, including conformity assessment, transparency obligations, audit trails, and human oversight mechanisms. Organisations that operate in EU markets or deploy AI affecting people in the EU are subject to its requirements regardless of where they are headquartered. Its obligations are being phased in through 2026 and 2027, and they represent the regulatory floor for AI governance in the EU market.

Q8. What is the most important first step in building AI governance?

Conducting an honest inventory of AI systems already in deployment is the most important first step. Most large organisations have significantly more AI operating than their governance structures are aware of, including shadow AI tools, vendor platforms with embedded AI components, and legacy algorithmic systems. You cannot govern what you cannot see. The inventory, followed by named ownership assignment to every identified system, produces more immediate governance impact than any framework document.
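As a rough illustration of the inventory-then-ownership step, the sketch below models an inventory as a list of records and reports which systems still lack a named owner. The record fields, the source categories, and the example entries are all hypothetical; a real inventory would carry far more detail.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AISystem:
    name: str
    source: str  # hypothetical categories: "internal", "vendor", "shadow", "legacy"
    owner: Optional[str] = None  # a named person, not a team or department


def unowned(inventory):
    """Return the systems that have no named owner (blank strings count as missing)."""
    return [s for s in inventory if not (s.owner and s.owner.strip())]


def ownership_coverage(inventory):
    """Fraction of inventoried systems with a named owner (0.0 for an empty inventory)."""
    if not inventory:
        return 0.0
    return (len(inventory) - len(unowned(inventory))) / len(inventory)


# Illustrative entries only; not drawn from any real organisation.
inventory = [
    AISystem("credit-scoring-model", "internal", owner="J. Doe"),
    AISystem("crm-lead-scoring", "vendor"),
    AISystem("chat-assistant-browser-plugin", "shadow"),
    AISystem("fraud-rules-engine", "legacy", owner="  "),
]

for system in unowned(inventory):
    print(f"No named owner: {system.name} ({system.source})")
print(f"Ownership coverage: {ownership_coverage(inventory):.0%}")
```

Even a list this simple exposes the gap that matters: every system without a name beside it is a system no one is accountable for.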

Q9. Why do AI governance frameworks often fail despite being formally adopted?

Formal governance frameworks fail when they are applied post-deployment rather than embedded in the design process, when incentive structures reward deployment speed over governance adherence, when technical and normative expertise remain siloed without functional bridges between them, and when governance processes apply principles uniformly to a heterogeneous population of AI systems rather than proportionately to their risk levels. Governance that exists in documents but does not shape actual decisions is governance in name only.

Q10. How should organisations represent the interests of people affected by AI in their governance structures?

Mature AI governance includes formal mechanisms for the interests of affected populations to influence governance decisions. This does not mean customers or employees design AI systems. It means their feedback is treated as governance input rather than customer service noise, their experience of AI-driven decisions is monitored as evidence of whether governance is working, and the governance framework explicitly asks whose interests the governance structures are designed to protect. Governance frameworks built primarily to protect the organisation from consequences of AI failure, rather than to protect the people AI affects, will eventually produce structures that serve the wrong interests.

Conclusion

The argument that AI transformation is a problem of governance is not a critique of artificial intelligence. It is a description of how transformative technologies consistently work. Every technology that has reshaped organisations at scale, from electricity to computing to the internet, has required governance infrastructure to deliver its potential sustainably. The organisations that built that infrastructure were the ones that extracted durable value. The ones that did not were the ones whose transformation stories ended in cautionary tales.

AI is not different in this respect. It is more urgent because its consequences are larger, faster, and harder to reverse. A flawed algorithmic decision applied to a million people produces harm at a speed and scale that no previous enterprise technology could match. The governance required to prevent and respond to that harm needs to operate at the same speed and scale.

The good news, and it is genuine good news, is that the organisations getting this right are demonstrating that governance and innovation are not in tension. The companies with mature AI governance are deploying more AI, more consistently, with less rework and fewer crises than those treating governance as a tax on progress. The discipline of asking whether an AI system should be built, under what constraints, with what accountability structure, and for whose benefit, does not slow the building. It makes the building worth doing.

AI transformation is a problem of governance. Solving the governance problem is not the end of the transformation. It is the condition under which transformation becomes something worth the name.